Michael Gleicher

David DeWitt Professor

Associate Chair for Undergraduate Programs

Department of Computer Sciences

University of Wisconsin, Madison

1205 University Ave

Madison, WI 53706, USA

gleicher@cs.wisc.edu

Office: 6588 Morgridge Hall (the new CS building!)

Office Hour: Summer 2026 - by appointment only

I am a professor working in areas related to Visual Computing. My research these days is mainly about robotics and data visualization. With both, I am interested in how we can make them useful for people. I remain interested in animation, virtual reality, multimedia, …

I have been recognized as the David DeWitt Professor of Computer Sciences, an ACM Fellow, a member of the IEEE Visualization Academy, and an IEEE Senior Member. I also hold a concurrent position as an Amazon Design Scholar. (Whenever I mention that, I am supposed to say “This work is not connected to Amazon.com.”)

A brief biography will tell you how I got here. You can see a reasonably current CV, but you probably are looking for papers, talks, videos or advice.

Teaching: CS559 Spring 2026

I have some pages with various Advice I generally give to students. This includes the format for status reports, what I’d like to see in Prelims and Theses, my grad school FAQ, or my advice on how to give a talk.

You might be interested in my grad school FAQ. Come and talk to me if you’re interested in data visualization, robotics, computer graphics or related topics. If you are an undergrad and looking to work on a project, please see Undergrad Research, Projects and Directed Studies. If you are asking about a reference letter, please see Reference Letters for Students in Classes.

If you’re interested in joining our group, come talk to me! If you aren’t a student at Wisconsin yet, please look at my grad school FAQ, particularly the last few questions.

Current Research Themes

The projects list was more than slightly out of date. I need to revitalize it. But there are several things going on with robotics (tele-operation, providing awareness to remote users, using novel sensors, …) and visualization (summarization, text collection exploration, uncertainty, …).

Selected Past (but recent) Themes

Teaching

These are the main classes I teach. You can see more on the Graphics Group Courses Page.

CS765 Visualization: This class is taught regularly. I taught it in Fall 2025. In the past, I taught it Fall 2024, Fall 2022, Fall 2021, Fall 2020, Fall 2019, Fall 2018, Fall 2017, Spring 2018 and several times before that as “special topics” experiments.

CS559 Computer Graphics: In Spring 2025, I taught an Accelerated Honors Section and supported Dr. Young Wu, who taught the regular section. I also taught this class in Spring 2023, Spring 2022, Spring 2021, Spring 2020, and Spring 2019. There are lots of previous offerings going back to 1999 (I’ve tried to recreate the web pages).

Older classes are so old they’ve been removed from the catalog!

- CS777: Computer Animation is a graduate-level CS class for people with some graphics background. This class was taught regularly in the past (2013, 2011, 2006, 2004, 2003). It kind of died off from lack of interest (both student interest and my interest).

- CS679 Computer Games Technologies: this class was popular, so I tried to teach it regularly for several years (2012, 2011, 2010).

- Advanced Graphics: In the Spring of 2009, I taught an Advanced Graphics class.

You can find other information on graphics group classes on the Graphics Group Courses Page.

Selected Recent Publications

A (pretty) complete list is available here. Here are some selected recent ones:

Robotics/Sensing

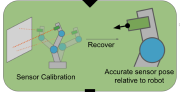

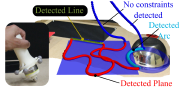

- RAL ‘24 (ICRA ‘25): Using a Distance Sensor to Detect Deviations in a Planar Surface w/Sifferman, Sun and Gupta

- RAL ‘24 (ICRA ‘25): Motion Comparator: Visual Comparison of Robot Motions w/Wang, Pesekis and Jiang - Visualization applied to robotics!

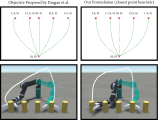

- ICRA ‘24: IKLink: End-Effector Trajectory Tracking with Minimal Reconfigurations w/Wang and Sifferman

- CVPR ‘24: Towards 3D Vision with Low-Cost Single-Photon Cameras w/ Mu, Sifferman, et al.

Visualization

- Arxiv ‘25: Augmenting a Large Language Model with a Combination of Text and Visual Data for Conversational Visualization of Global Geospatial Data w/Mena, Kouyoumdjian, Viola and Ynnerman

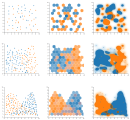

- TVCG ‘25 (Vis ‘24): Beware of Validation by Eye: Visual Validation of Linear Trends in Scatterplots w/ Baum, Chang, and von Landesberger

- Arxiv ‘24: Enhancing Text Corpus Exploration with Post Hoc Explanations and Comparative Design w/Bai and Leppanan - This is the AbstractsViewer paper (yes, you can try the demo).